Why Do Many Token When Few Do Trick

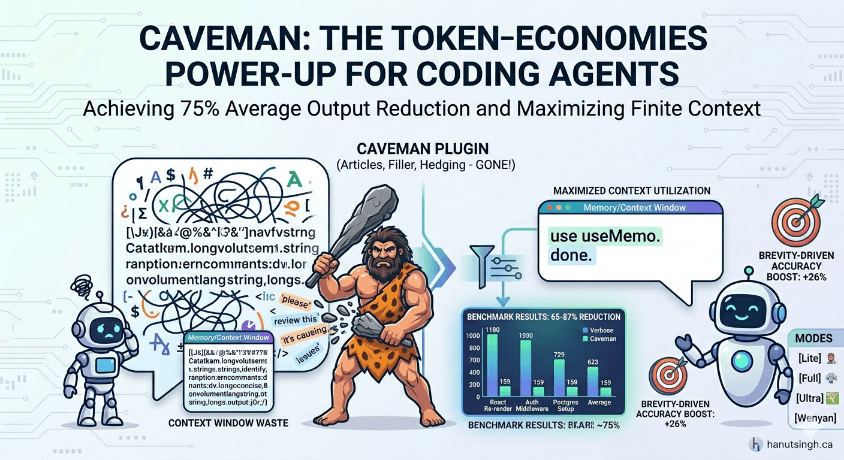

Caveman is a cross-harness plugin that strips filler from LLM output, cutting token use by up to 87 percent without losing accuracy.

Context windows lie to you. You see "200k tokens" on the box and think you have room to breathe. Then you load three files, a system prompt, a tool schema, some memory, and half your budget is already gone. Now you actually need the model to reason about something, and you are rationing.

I have been watching this problem get worse, not better, regardless of which coding harness I am in. Claude Code, Gemini CLI, Cursor, Copilot. They all share the same underlying squeeze. As models get smarter, we keep asking them to do more in a single turn. Bigger refactors. Longer traces. More files in scope at once. The ceiling moves up and we immediately build taller.

This is why a silly little tool called Caveman caught my eye.

The bit

Caveman is a plugin that tells whatever model you are driving to drop articles, filler words, hedging language, and pleasantries from its output. No "the." No "a." No "basically." No "I hope this helps." Just the signal. It ships as an installable skill across pretty much every serious coding harness out there. Claude Code, Gemini CLI, Cursor, Windsurf, Cline, Copilot, and Codex are all supported out of the box. The repo lives at https://github.com/JuliusBrussee/caveman and the README joke says it all - Why do many token when few do trick.

The framing is goofy on purpose. The underlying idea is not.

Before and after

Here is what a normal explanation of a React re-render tends to look like:

The reason your component is re-rendering on every parent update is likely because you are passing an inline object as a prop. Each render creates a new object reference, which React's shallow comparison interprets as a prop change, triggering the re-render. To fix this, you can wrap the object in useMemo so the reference stays stable across renders.

And here is the caveman version:

New object ref each render. Inline object prop = new ref = re-render. Wrap in useMemo.

Both answers are correct. One costs you roughly 60 tokens. The other costs roughly 20. Do that math across a full session and the difference stops being cute.

The numbers

The repo ships with reproducible benchmarks using real API token counts. These track with what I saw when I kicked the tires on it.

| Task | Normal | Caveman | Reduction |

|---|---|---|---|

| React re-render explanation | 1,180 | 159 | 87% |

| Auth middleware fix | 704 | 121 | 83% |

| PostgreSQL connection setup | 2,347 | 380 | 84% |

| Average across 10 tasks | 1,214 | 294 | 65% |

Range is 22 percent to 87 percent depending on the task. Average output reduction sits around 75 percent. There is also a secondary tool called caveman-compress that rewrites memory files into caveman speak at session start, which claims 37 to 60 percent reduction on documentation. These are not marketing numbers. They are straightforward to reproduce yourself.

Why this actually matters

I want to be clear about what Caveman is and is not. It is not a way to make the model smarter. It is not a prompt engineering hack. It is a way to stop paying rent on tokens you do not need.

Context windows are a zero sum game. Every token the model spends saying "I hope this helps, let me walk you through" is a token it cannot spend holding your actual codebase in scope. In a long session, verbose responses push useful context out the back of the window. You are not just paying for fluff. You are losing working memory to it.

There is also a more interesting angle buried in the README. It references a March 2026 study called "Brevity Constraints Reverse Performance Hierarchies in Language Models" which found that constraining models to brief responses improved accuracy on certain benchmarks by 26 percentage points. Brevity is not just cheaper. It may also be more correct.

That lines up with my own instinct. When a junior engineer asks me to explain something and I force myself to answer in one sentence, I have to actually understand what I am saying. Filler is where sloppy thinking hides. Models behave the same way.

The other bit I like is that Caveman only compresses output tokens. Thinking and reasoning tokens are left alone. So you keep the model's full chain of thought and cut only the performative preamble at the end. That is the right tradeoff.

Try it

Installation is a one liner no matter which harness you live in.

# Cursor, Windsurf, Cline, Copilot (and most others)

npx skills add JuliusBrussee/caveman -a <agent-name>

# Claude Code

claude plugin marketplace add JuliusBrussee/caveman

claude plugin install caveman@caveman

# Gemini CLI

gemini extensions install https://github.com/JuliusBrussee/caveman

# Codex

# clone the repo and search the local marketplace

You toggle it with /caveman (or $caveman on Codex), or just by telling the model "talk like caveman." Turn it off with "stop caveman" or "normal mode." There are four intensity levels. Lite keeps grammar intact. Full drops articles and hedging. Ultra uses abbreviations. Wenyan compresses into classical Chinese literary style, which is the most efficient token wise and also the most unhinged, and I respect that.

The bigger idea

Caveman is a joke with a point. The joke is that your LLM has been doing customer service theater this whole time, apologizing and hedging and padding answers because that is what the training data rewarded. The point is that you do not owe a compiler a polite greeting. You need the answer.

If the next generation of agents is going to spend all day in long running loops with shared context, the economics of every token start to matter in a real way. Not in a "save a few cents" way. In a "fit more of the problem in your head at once" way. Context is thinking space. Treat it like one.

Also it is just funny to watch your assistant of choice drop into caveman mode and tell you "use useMemo. done." I have not gotten tired of it yet.

References

- Caveman on GitHub: https://github.com/JuliusBrussee/caveman

- Brevity Constraints Reverse Performance Hierarchies in Language Models (March 2026), referenced in the Caveman README